Ikusi, a Velatia company, is a technology services provider specializing in digitalization and cybersecurity

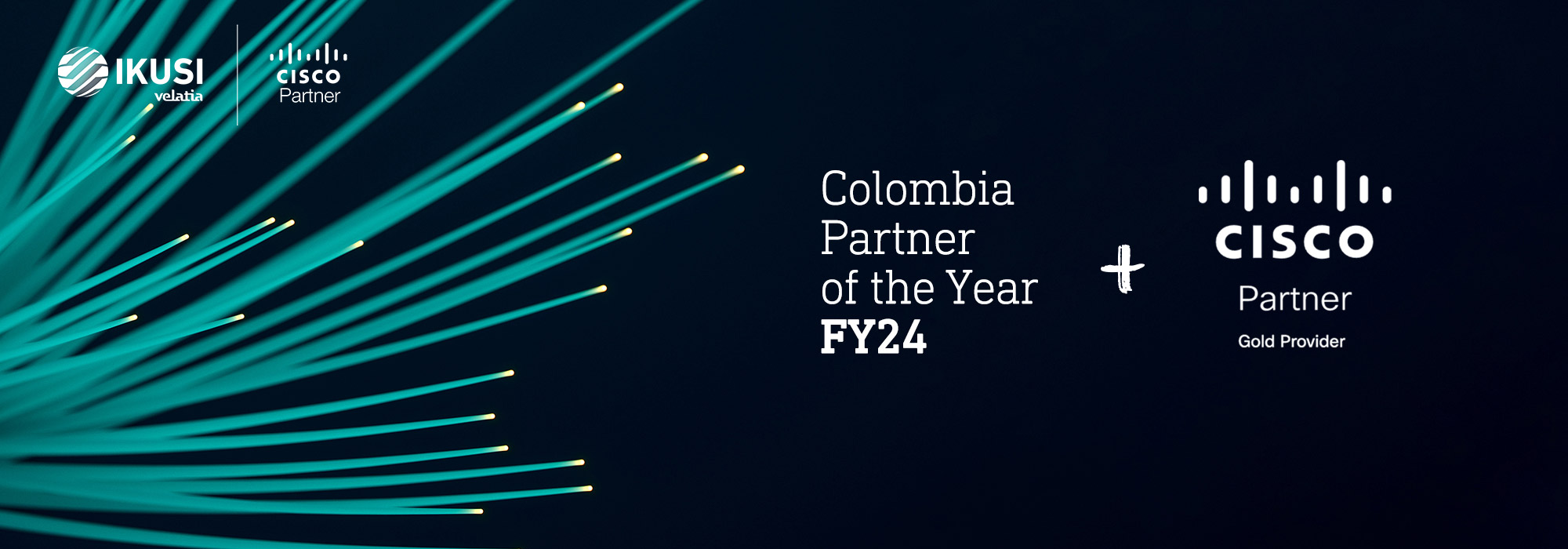

Ikusi, a Velatia company, provides advanced technology services and specializes in digitalization and cybersecurity. A leader in the design, implementation, and management of communications infrastructure, it offers organizations efficient, robust, and secure enterprise networks. With more than 20 years of experience, it operates in Mexico, Colombia, and Spain and employs more than 900 industry professionals.